SpeakEasy

29 January 2026

A tool that checks content against readability standards and creates clear, plain-language versions.

The problem SpeakEasy addresses

Government website content is often hard to read

Government websites have become faster, more accessible, and more reliable over the years. However, clarity of content has emerged as a limiting factor in the citizen experience. Content is often comprehensive and technically accurate, but difficult to read. This shows up most when citizens try to understand eligibility or requirements for schemes, visas, or policies. In many cases, people either spend a long time trying to make sense of the information or give up and contact the agency to get their answers.

Only 13% of public-facing content meets lower secondary reading levels

Across five key government sites, only 13% of content is written at lower secondary level or below. The median reading level is Pre-University / Junior College (JC). That is above the highest completed education level of 36% of Singapore residents (based on 2024 data), so this group is likely to find the content challenging.
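Figures like these depend on assigning a reading grade to each piece of content. The source does not say which formula SpeakEasy uses, so the sketch below assumes the widely used Flesch-Kincaid grade level formula with a rough syllable heuristic. The function names, and the idea of comparing grades against a single threshold, are illustrative assumptions rather than the tool's actual method.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count groups of consecutive vowels, minimum one."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # Treat a trailing silent "e" (as in "make") as non-syllabic.
    if lowered.endswith("e") and not lowered.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Estimate a US school grade using the Flesch-Kincaid grade level formula."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        0.39 * len(words) / len(sentences)
        + 11.8 * syllables / len(words)
        - 15.59
    )


def share_at_or_below(grades: list[float], threshold: float) -> float:
    """Fraction of content items whose grade is at or below the threshold."""
    return sum(g <= threshold for g in grades) / len(grades)
```

Mapping a numeric grade to a Singapore education band (for example, treating lower secondary as roughly grade 8) is a separate assumption that would need to be agreed before scores are reported.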

Unclear content has real costs

When information is hard to understand, people reach out to agencies more often, increasing enquiry volume and operational load. At the same time, unclear content leads to poor and inconsistent understanding of policies, resulting in uneven outcomes.

What was delivered during the hackathon

A tool that checks content against readability standards and creates clear, plain-language versions.

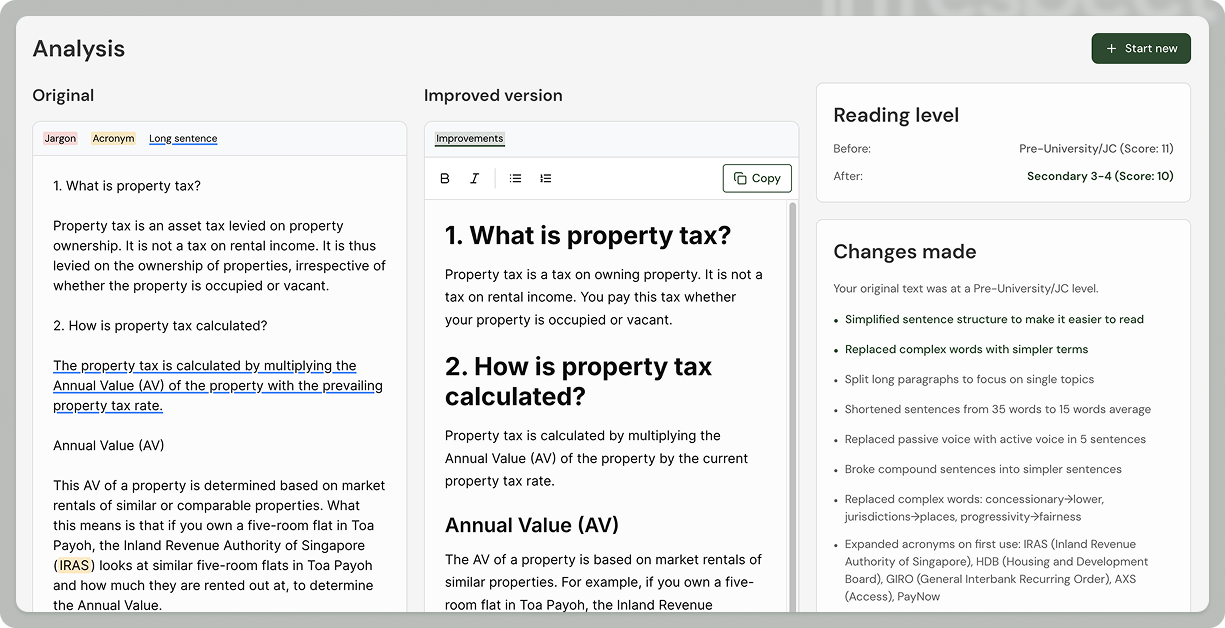

In January, we built and tested a SpeakEasy prototype. The tool can:

Check readability of pasted content

Find unexplained acronyms

Identify jargon

Create AI-assisted rewrites, tailored to a target reading level based on WCAG (Web Content Accessibility Guidelines)

Show side-by-side comparisons of original and rewritten content

Summarise changes

This tool gives content writers a shared, standardised way to check readability and make specific improvements, rather than guessing or using different online tools.
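To make one of the checks above concrete, here is a minimal sketch of how unexplained acronyms could be flagged. It assumes an acronym counts as explained if it appears in parentheses after its full name, or is followed by a parenthesised expansion, anywhere in the text. The function name and the matching rules are hypothetical. The source does not describe how SpeakEasy implements this check.

```python
import re


def find_unexplained_acronyms(text: str) -> list[str]:
    """Return all-caps acronyms that are never paired with an expansion."""
    acronyms = set(re.findall(r"\b[A-Z]{2,}\b", text))
    explained = set()
    for acronym in acronyms:
        escaped = re.escape(acronym)
        # Pattern 1: "Full Name (ABC)"
        after_name = re.search(r"\w\s*\(" + escaped + r"\)", text)
        # Pattern 2: "ABC (Full Name)"
        before_name = re.search(r"\b" + escaped + r"\s*\([^)]+\)", text)
        if after_name or before_name:
            explained.add(acronym)
    return sorted(acronyms - explained)


sample = (
    "Rewrites follow WCAG (Web Content Accessibility Guidelines). "
    "Apply through CPF or the Housing and Development Board (HDB)."
)
print(find_unexplained_acronyms(sample))
```

A simple pattern like this will also flag all-caps words that are not acronyms, so its output is better treated as a list of candidates for a writer to review than as a verdict.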

The challenge of maintaining policy intent while simplifying

In January, we tested SpeakEasy with content writers from three agencies through 45-minute usability sessions. We reviewed real, publicly available pages and used the prototype to assess readability and suggest improvements. In multiple cases, sections of content dropped from university-level reading difficulty to Secondary 3–4, while preserving the original meaning and intent.

Testing with three agencies surfaced a key issue: automated rewrites can oversimplify legally precise or policy-specific terms. Agencies are willing to accept some complexity to keep policy intent and accuracy. This showed the need for a list of approved terms and clear explanations of changes. We added side-by-side comparisons and change summaries to support human review. These trials showed that readability scoring is useful for finding and fixing issues, but human judgment is still needed to review edits, preserve policy intent, and bring content to a publishable state.
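One way a list of approved terms could support human review is a check that flags any protected term present in the original but missing from the rewrite. The sketch below illustrates that idea under stated assumptions: the function name, the case-insensitive substring matching, and the example terms are all hypothetical and are not taken from the SpeakEasy prototype.

```python
def missing_protected_terms(
    original: str, rewrite: str, protected_terms: list[str]
) -> list[str]:
    """Return protected terms that appear in the original but not in the rewrite."""
    original_lower = original.lower()
    rewrite_lower = rewrite.lower()
    return [
        term
        for term in protected_terms
        if term.lower() in original_lower and term.lower() not in rewrite_lower
    ]


original = "You must be a permanent resident to apply for the grant."
rewrite = "You must live here long-term to get the grant."
terms = ["permanent resident", "grant", "employment pass"]  # illustrative list

# Flags terms the rewrite dropped, so a reviewer can restore or explain them.
print(missing_protected_terms(original, rewrite, terms))
```

A check like this only reports that a term has disappeared. Whether the simpler wording is still accurate remains a decision for the reviewer.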

Signals from agencies and partners

“Having a score helps people to improve”

Participants consistently found value in seeing concrete scores and highlighted passages, rather than receiving general writing advice. One content writer shared, “We already try to write plainly, but we’ve never had a way to measure it.”

“If it’s just a guideline, nobody will really follow it. Having a score helps people improve. Different tools give different results. It’s very useful if everyone uses the same calculator.” (UX agency supporting a statutory board website)

Another reflected that readability is especially important for policy content: “It’s a very interesting tool for policy folks — there’s no point communicating something if no one understands it.”

These sessions showed that readability scoring, with clear explanations and human review, can make content issues clear and fixable in ways that existing guidance cannot.

Plugging into the ecosystem

From a governance perspective, we have also engaged GovTech’s Usability and Accessibility team. They signalled willingness to operationalise readability by incorporating readability measurement as part of Digital Service Standards control implementation and into their Digital service awards. These signals position SpeakEasy as a practical enabler that can integrate into existing platforms (e.g. Isomer, WOGAA) and governance systems.

Try it out today at https://speakeasy.hack.gov.sg/

If you would like to explore how this could support your content work, indicate your interest with this form.