Ref.AI

30 January 2026

Turning clinical notes into referral-ready drafts, so clinicians spend less time on forms and more time on patient care.

Opportunity

The Problem

Referrals are a critical part of the patient journey, but today they often require clinicians to manually copy information from multiple systems into long, repetitive forms.

This creates several challenges:

Time wasted on duplicative data entry

Increased burden on receiving institutions during triage

Costly referral rejections and delays

Every extra minute spent on paperwork is a minute taken away from clinical decision-making and patient interaction. Delays in referrals also directly affect patient outcomes.

Validating the Need

To ensure Ref.AI addresses a real and urgent problem, we spoke directly with clinicians who create referrals daily.

We reached out to:

General Practitioners using the RefX referral platform

Doctors using BRIGHT for long-term care referrals

Through targeted workflow walkthroughs and usability sessions, we explored how referrals are actually created in practice.

Two key insights emerged:

1. Repetitive Data Entry (RefX): Doctors report typing the same patient context information multiple times across different systems and forms. This administrative burden takes time away from patient care.

2. Information Overload Leading to Inefficiencies (BRIGHT): Under tight schedules, healthcare professionals filling in referrals sometimes copy and paste lengthy blocks of patient information without curating it. The resulting referrals contain excessive, disorganised information. This increases reviewers' cognitive load and can extend turnaround times through additional rounds of clarification between service providers and referral creators.

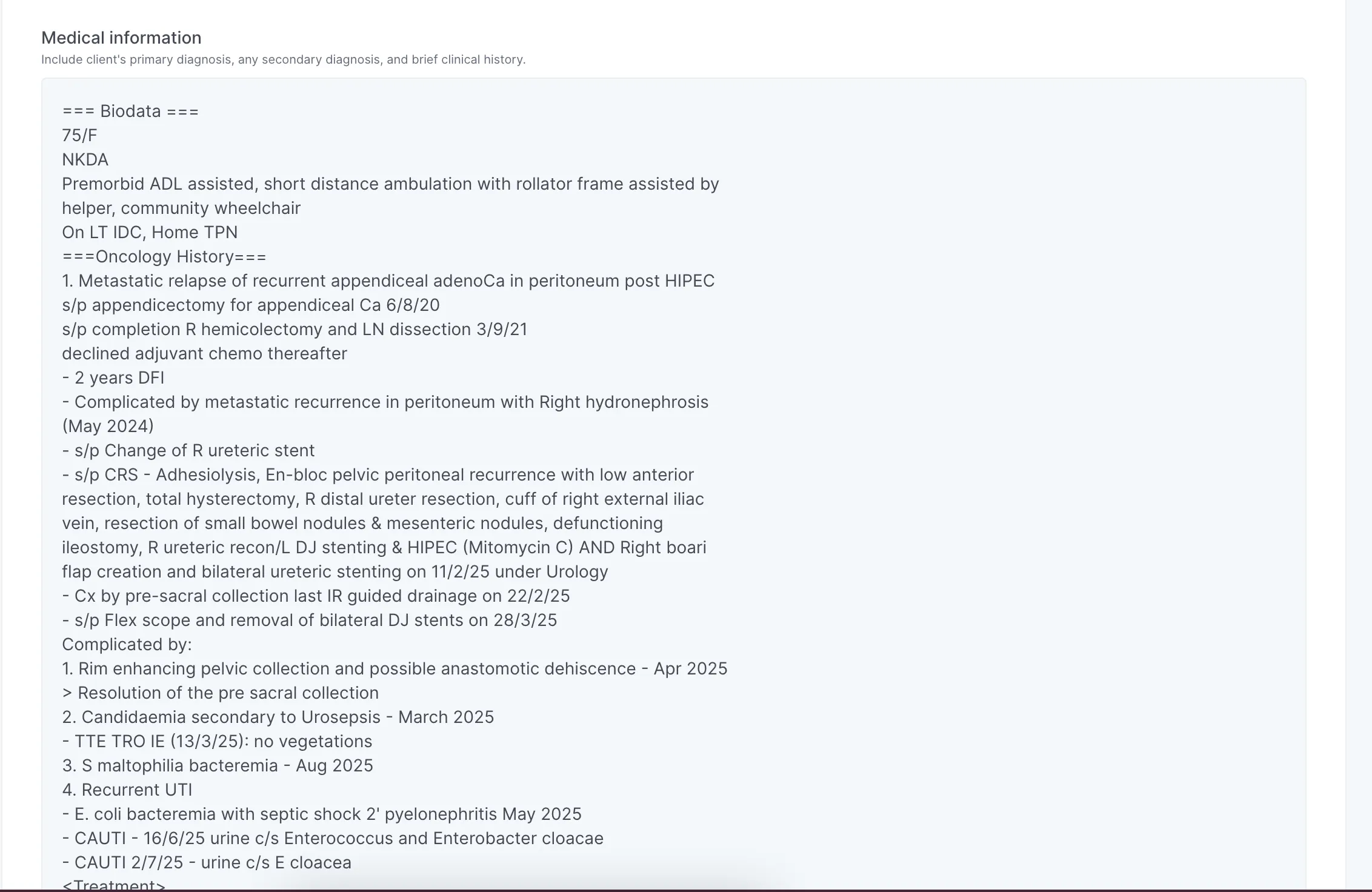

An example, using mock data, of a BRIGHT medical report filled with copy-pasted data.

Velocity

The Solution

We built an early working prototype to demonstrate how Ref.AI fits into clinicians' referral workflows. The prototype uses an LLM with a prompt engineered specifically for clinical extraction tasks. The prompt asks for structured output, which is validated against the form schema to check that it matches the form's expected structure. The validated output is then mapped into the referral form sections.

With one click, the system ingests clinical notes, extracts key referral fields (reason for referral, history, medications, diagnoses), generates structured output aligned with the referral template, and auto-populates the form for the clinician to review and submit.
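The validate-then-map step described above can be sketched in Python. This is a minimal sketch under stated assumptions, not Ref.AI's actual code. It assumes the LLM is asked to reply with a JSON object. The field names, expected types and section labels (`FIELD_SCHEMA`, `FORM_SECTIONS`, `validate_output`, `map_to_sections`) are hypothetical stand-ins for the real RefX and BRIGHT form schemas, which are not described here. Passing validation only shows that the reply has the right shape. Clinical accuracy still depends on clinician review.

```python
import json
from typing import Any

# Hypothetical field schema (field name -> expected type after JSON parsing).
# These four fields mirror the examples above; real form schemas will differ.
FIELD_SCHEMA: dict[str, type] = {
    "reason_for_referral": str,
    "history": str,
    "medications": list,
    "diagnoses": list,
}

# Hypothetical mapping from each extracted field to the form section it pre-fills.
FORM_SECTIONS: dict[str, str] = {
    "reason_for_referral": "Referral Details",
    "history": "Clinical History",
    "medications": "Current Medications",
    "diagnoses": "Diagnoses",
}


def validate_output(raw: str) -> tuple[dict[str, Any], list[str]]:
    """Parse the model's JSON reply and check it against FIELD_SCHEMA.

    Returns (parsed_fields, problems). An empty problems list means the reply
    has the expected structure; it says nothing about clinical accuracy.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return {}, [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return {}, ["top-level value must be a JSON object"]

    problems: list[str] = []
    for name, expected in FIELD_SCHEMA.items():
        if name not in data:
            problems.append(f"missing field: {name}")
        elif not isinstance(data[name], expected):
            problems.append(f"{name}: expected {expected.__name__}")
        elif expected is list and not all(isinstance(item, str) for item in data[name]):
            problems.append(f"{name}: list items must be strings")
    # Flag keys the form has no slot for, rather than silently dropping them.
    for name in sorted(data.keys() - FIELD_SCHEMA.keys()):
        problems.append(f"unexpected field: {name}")
    return data, problems


def map_to_sections(data: dict[str, Any]) -> dict[str, str]:
    """Convert validated fields into draft text per form section for clinician review."""
    draft: dict[str, str] = {}
    for name, section in FORM_SECTIONS.items():
        value = data[name]
        draft[section] = "\n".join(value) if isinstance(value, list) else value
    return draft


if __name__ == "__main__":
    # Mock model reply; no real patient data.
    reply = json.dumps({
        "reason_for_referral": "Orthopaedic review of right knee pain",
        "history": "Six months of worsening knee pain on exertion.",
        "medications": ["Paracetamol 1 g as needed"],
        "diagnoses": ["Suspected knee osteoarthritis"],
    })
    fields, problems = validate_output(reply)
    if problems:
        # One option: re-prompt the model or fall back to manual entry.
        print("Rejected:", problems)
    else:
        for section, text in map_to_sections(fields).items():
            print(f"{section}: {text}")
```

This sketch keeps validation separate from mapping. A rejected reply therefore never reaches the form, and the list of problems could be fed back into a retry prompt.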


CHAS form pre-filling in RefX using mock data

Pasting a discharge summary into BRIGHT

Suggested answers for BRIGHT referral questions

Testing with Users

Using anonymised test data provided by the doctors, we ran their cases through our prompt and generated outputs live with our users. In each session, we walked clinicians through the drafted referral and gathered feedback on usability, completeness, and editing effort.

Based on their live feedback, we iterated quickly, refining prompts to better match clinician expectations. This helped validate both technical feasibility and workflow fit.

Traction

We tested Ref.AI with real users in the BRIGHT and RefX user acceptance testing (UAT) environments.

Early Impact

Initial responses from clinicians were positive across both platforms we tested.

For RefX, two GPs reported that drafts for simpler cases "looked great" and could be used immediately without edits. For more complex cases, one GP estimated that editing the draft would take five minutes or less, compared with 7–10 minutes to complete a referral form manually.

For BRIGHT, the feature received a rating of 4 out of 5 for both accuracy and usefulness. The clinician we interviewed found the summaries "not too bad" and "understandable as a clinician". For complex cases with lengthy discharge summaries, the doctor noted that while the AI summaries weren't perfect, they were "quite good". They were comparable to summaries written by medical professionals under time pressure, and better than the raw pasted discharge summaries that some doctors currently use.

They also particularly valued Ref.AI's ability to provide structured, concise summaries, which would help reduce cognitive load for those on the receiving end of referrals.

Most importantly, the feature was seen as a significant improvement over the existing "retrieve info from EMR" function. Users had previously described that function as "not usable" because the information it retrieves is incomplete and unstructured.

"Going through patient information is time-consuming. This would save me time."

"It's a very good use case ... hope it eventually gets scaled!"

Looking Ahead

On BRIGHT's end, we are keen to explore whether the feature can be integrated as an alternative way to fill in the medical report. It could potentially be extended to other parts of the referral form.

On RefX's end, we intend to explore using existing integrations with GPs' Clinic Management Systems (CMS) to automate CHAS form filling. If there is a good product fit, we will roll it out to all referral forms to make referral creation more efficient for end users.

We also intend to develop Ref.AI into a more scalable, out-of-the-box solution that works across different medical forms and form schemas. We plan to do this by standardising how form schemas are represented. We will also allow prompts to be tuned so that results stay good despite differences in form structure. Where necessary, we will offer customisation for specific workflows through configurable context and prompts beyond the standard setup.
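One way to read "standardising schema representation" is to describe every form with the same small set of field definitions and generate the extraction prompt from them. The sketch below is an assumption, not Ref.AI's design. `FieldSpec`, `FormSchema`, `build_prompt` and both example forms are hypothetical. The `extra_context` string stands in for the configurable, workflow-specific context described above.

```python
from dataclasses import dataclass, field


@dataclass
class FieldSpec:
    """One field in a hypothetical form-agnostic schema representation."""
    key: str                # key the model must use in its JSON reply
    label: str              # human-readable label on the form
    kind: str = "text"      # "text" or "list" in this sketch
    guidance: str = ""      # optional per-field instruction


@dataclass
class FormSchema:
    """Any referral form expressed with the same building blocks."""
    name: str
    fields: list[FieldSpec] = field(default_factory=list)
    extra_context: str = ""  # configurable, workflow-specific context


def build_prompt(schema: FormSchema, notes: str) -> str:
    """Render one generic extraction prompt from a schema, so a single template serves many forms."""
    lines = [
        f"From the clinical notes below, fill in the form '{schema.name}'.",
        "Reply with a JSON object containing exactly these keys:",
    ]
    for spec in schema.fields:
        if spec.kind not in ("text", "list"):
            raise ValueError(f"unsupported field kind: {spec.kind}")
        shape = "a list of strings" if spec.kind == "list" else "a string"
        hint = f" ({spec.guidance})" if spec.guidance else ""
        lines.append(f'- "{spec.key}": {spec.label}, as {shape}{hint}')
    if schema.extra_context:
        lines.append(f"Additional guidance: {schema.extra_context}")
    lines.append("Clinical notes:")
    lines.append(notes)
    return "\n".join(lines)


# Two hypothetical forms described the same way; field choices are illustrative only.
GP_REFERRAL = FormSchema(
    name="GP referral (example)",
    fields=[
        FieldSpec("reason_for_referral", "Reason for referral"),
        FieldSpec("medications", "Current medications", kind="list"),
    ],
)
LTC_MEDICAL_REPORT = FormSchema(
    name="Long-term care medical report (example)",
    fields=[
        FieldSpec("history", "Relevant history", guidance="summarise; do not paste verbatim"),
        FieldSpec("diagnoses", "Diagnoses", kind="list"),
    ],
    extra_context="Keep the summary concise for the receiving team.",
)

if __name__ == "__main__":
    print(build_prompt(LTC_MEDICAL_REPORT, "Mock discharge summary text."))
```

Under this design, supporting a new form means writing a new `FormSchema` rather than a new prompt. The same field definitions could also drive the schema validation step.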