Open47

3 February 2026

Uses AI to review code for security issues in 5 minutes

Opportunity — What problem are you working on, and why it matters

Imagine the consequences: If go.gov.sg is compromised, attackers could turn trusted government links into phishing traps. Or if an Isomer site is breached, attackers could edit official content to mislead the public, spread false information, or run scams.

Real risks exist. In 2025 alone, insecure code triggered 12+ security incidents and near-misses across OGP, with recurring issues like inadequate access checks.

Current approach falls short. Annual security testing means problems can go undetected for up to 12 months. Manual code reviews by experts are slow, can’t keep up with 80+ engineers, and often miss subtle risks.

Open47 sees everything. It automatically analyses how website code works together, checks existing security measures, and confirms whether security gaps could actually be exploited by attackers — reducing false alarms while catching real threats every time developers submit new code.

Fast, cheap, effective. Security reviews cost about $1 and finish in under 10 minutes, giving government teams instant security feedback instead of waiting months for annual tests.

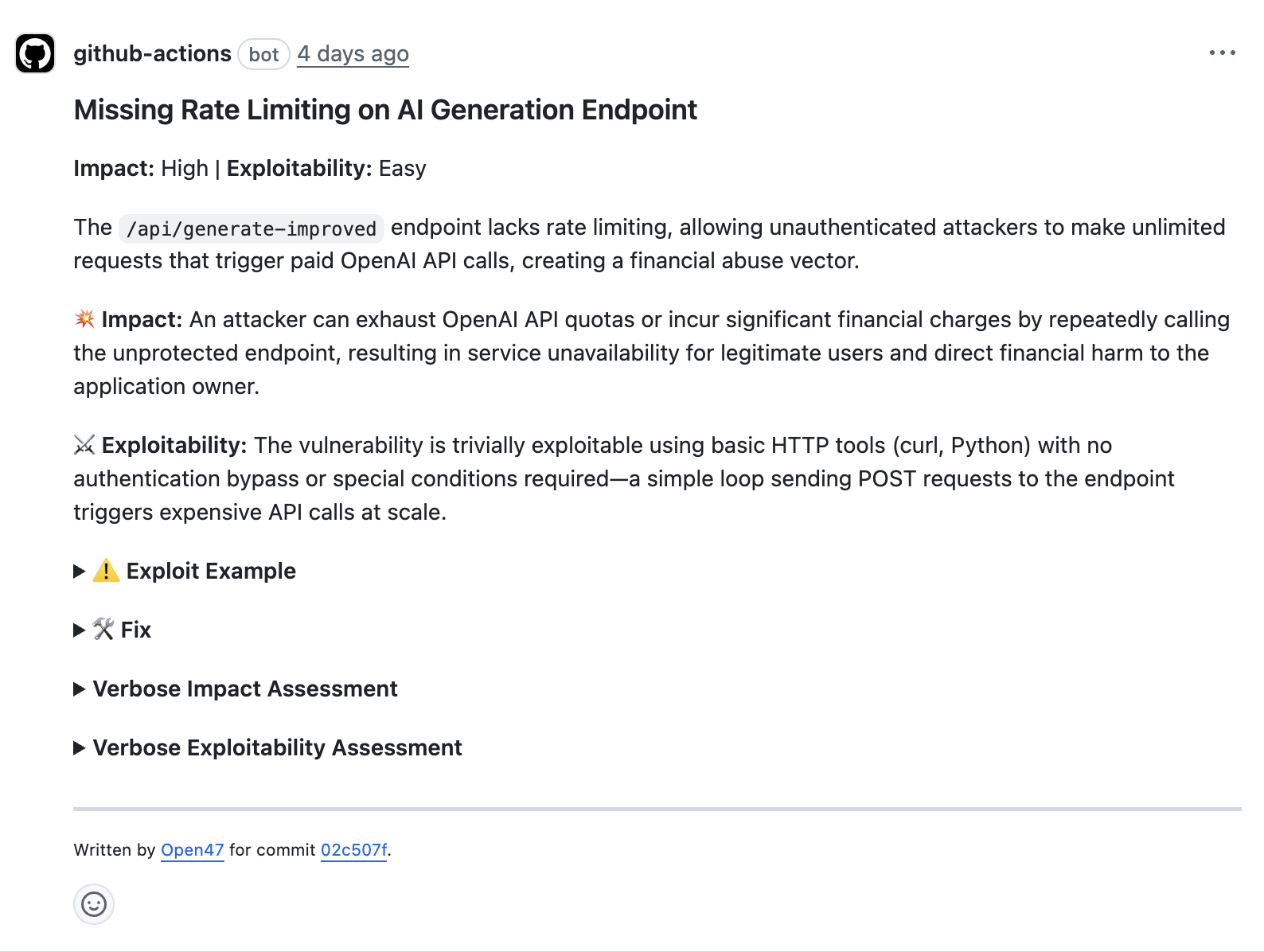

Example of a GitHub comment left by Open47 about an issue where part of the system is open to anyone on the internet with no login or limits. If someone misuses it, they can run it repeatedly, causing high costs and slowing or breaking the service for real users.

Velocity — What you actually built or changed in the last month

What users can do now: Government developers can now get their software checked for security problems in under 10 minutes, instead of waiting up to 12 months for the annual security review. Open47 automatically spots security weaknesses that could put citizen data at risk - problems that developers might not even know to look for.

We learned from our mistakes: Our first version (V1) had a major flaw - it only looked at new code changes, missing the bigger picture. It kept flagging problems that weren't actually dangerous because other parts of the system already protected against them. Developers got too many false alarms and started ignoring the tool.

"I might have to turn it off - the false positives are too high." - One frustrated user of V1

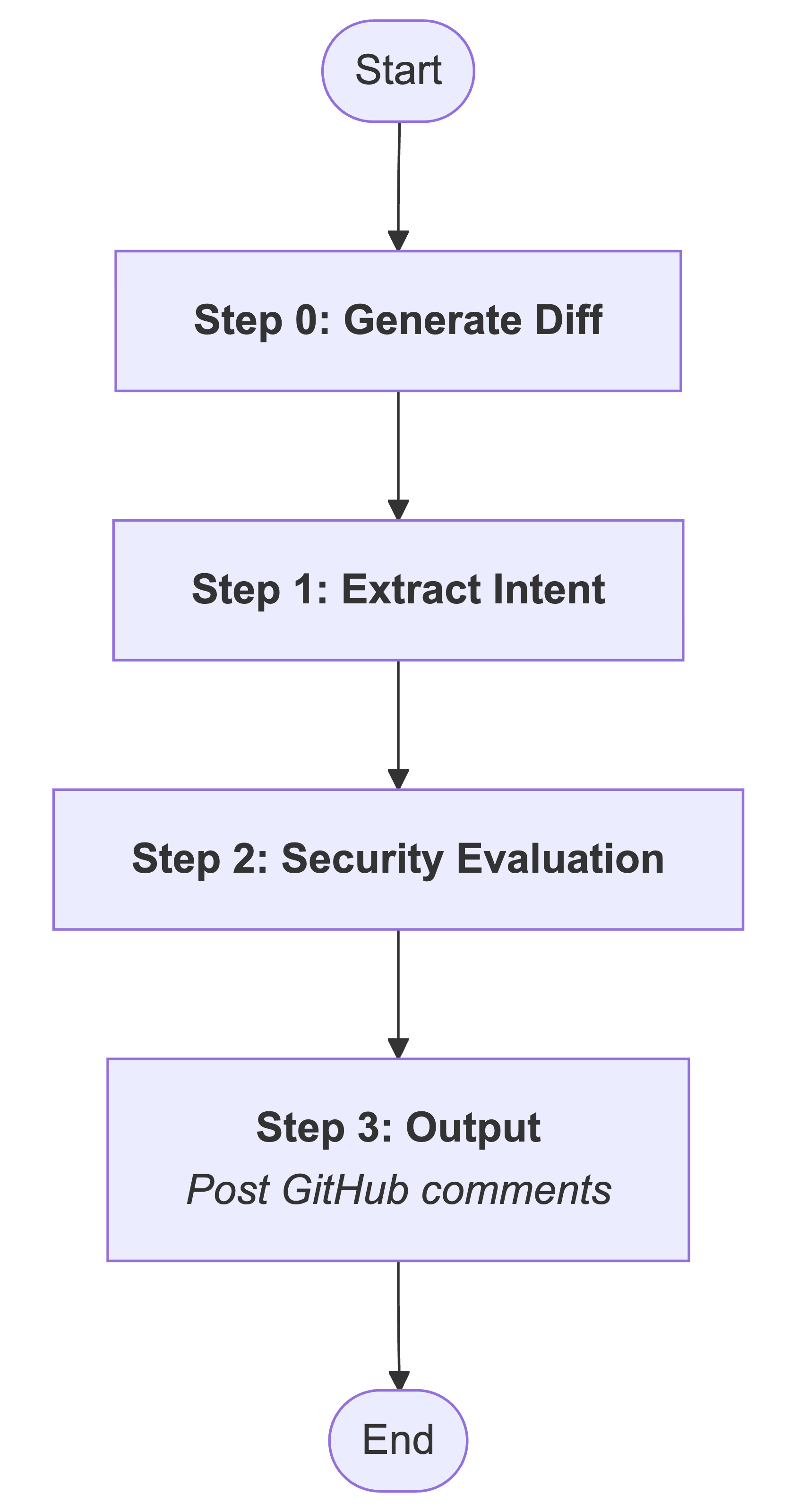

This shows how V1 worked - it only looked at new code changes, which meant it missed important security protections already in place elsewhere.

Our new approach focuses on the full picture: We completely rebuilt Open47 to examine all the code in a project before reporting problems. Instead of just looking at one piece in isolation, it now understands how different parts of the software work together to decide if something is actually a security risk.

Key improvements: The tool now runs multiple security checks to catch more real problems, handles large code changes more reliably by breaking them into smaller pieces, and double-checks its findings against the entire project before alerting developers.
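To make the "breaking them into smaller pieces" idea concrete, here is a minimal sketch of one way a large change could be split up before review. It is not Open47's actual code: the function names, the `max_lines` budget, and the sample diff are all hypothetical, and it assumes the change arrives as a standard `git diff`.

```python
from typing import Dict, List


def split_diff_by_file(diff_text: str) -> Dict[str, List[str]]:
    """Group the lines of a git diff under the file they belong to."""
    files: Dict[str, List[str]] = {}
    current = None
    for line in diff_text.splitlines():
        if line.startswith("diff --git "):
            # Header looks like: diff --git a/<path> b/<path>
            current = line.split(" b/", 1)[-1]
            files[current] = []
        if current is not None:
            files[current].append(line)
    return files


def batch_files(files: Dict[str, List[str]], max_lines: int) -> List[List[str]]:
    """Greedily pack files into batches of at most max_lines diff lines.

    A single file longer than max_lines still gets a batch of its own.
    """
    batches: List[List[str]] = []
    batch: List[str] = []
    size = 0
    for path, lines in files.items():
        if batch and size + len(lines) > max_lines:
            batches.append(batch)
            batch, size = [], 0
        batch.append(path)
        size += len(lines)
    if batch:
        batches.append(batch)
    return batches


# Hypothetical three-file change, for illustration only.
SAMPLE_DIFF = "\n".join([
    "diff --git a/app/auth.py b/app/auth.py",
    "+def login(): ...",
    "+def logout(): ...",
    "diff --git a/app/upload.py b/app/upload.py",
    "+def save(file): ...",
    "diff --git a/README.md b/README.md",
    "+New docs",
    "+More docs",
    "+Even more docs",
])

if __name__ == "__main__":
    print(batch_files(split_diff_by_file(SAMPLE_DIFF), max_lines=5))
```

Each batch can then be reviewed on its own, which keeps any single review small even when the overall change is large.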

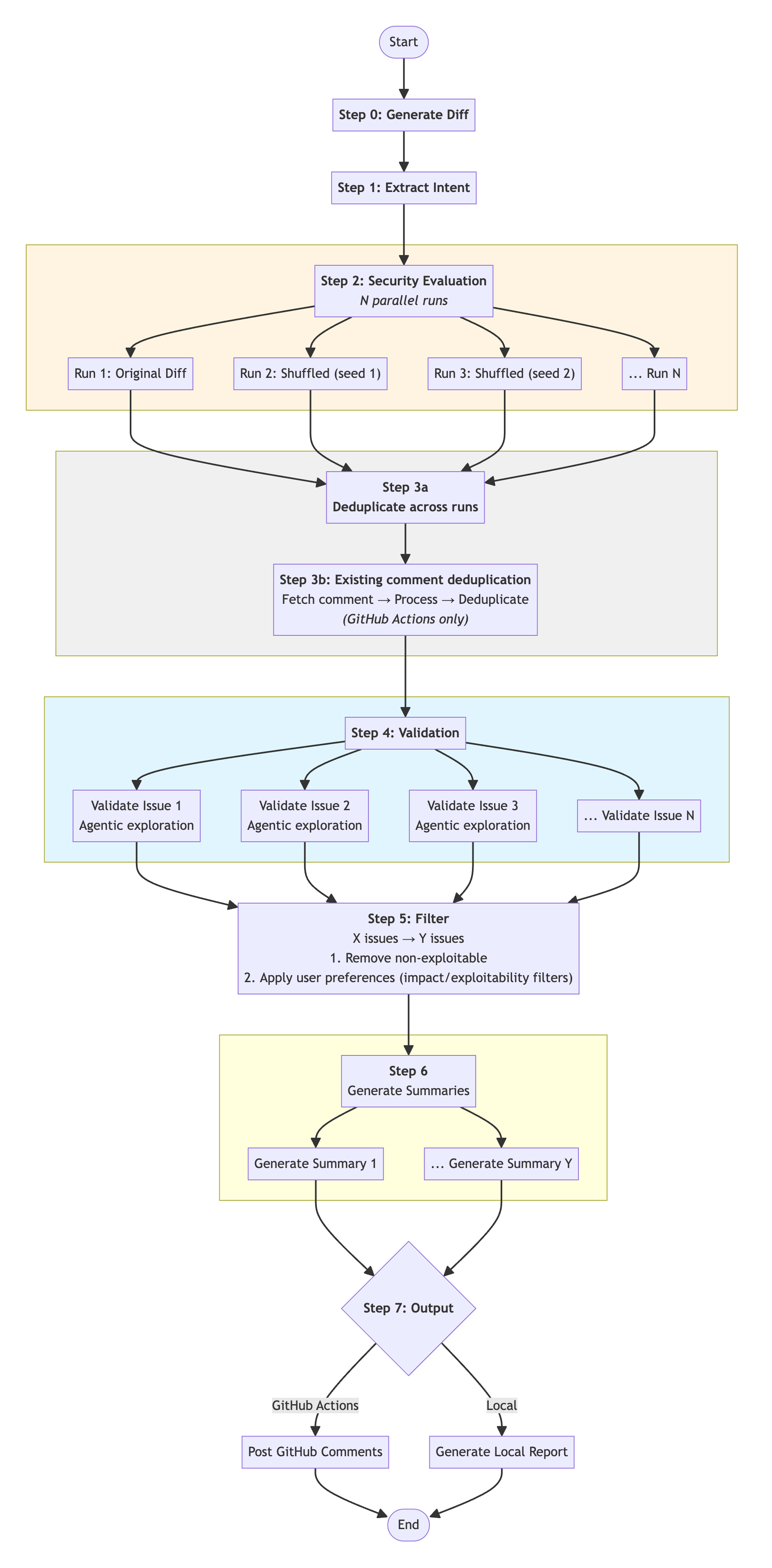

This shows our improved V2 - it now examines the entire codebase to understand how different parts work together before flagging security issues.
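The difference between V1 and V2 can be pictured with a toy model. This is only an illustration under stated assumptions, not how Open47 works internally (the real tool uses AI analysis of the codebase): `Finding`, `confirm_findings`, and the `project_controls` map are hypothetical names invented for this sketch.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Finding:
    route: str            # where the diff-only pass saw a problem
    missing_control: str  # e.g. "auth" or "rate_limit"


def confirm_findings(
    findings: List[Finding],
    project_controls: Dict[str, Set[str]],
) -> List[Finding]:
    """Keep only findings that the rest of the project does not already cover.

    project_controls maps a route to the protections that apply to it once
    the whole codebase (middleware, shared helpers, and so on) is considered.
    """
    confirmed = []
    for finding in findings:
        controls = project_controls.get(finding.route, set())
        if finding.missing_control not in controls:
            confirmed.append(finding)
    return confirmed


# V1-style candidates from looking at the new code alone (made-up data).
candidates = [
    Finding(route="/admin", missing_control="auth"),
    Finding(route="/export", missing_control="rate_limit"),
]

# What a whole-project view might reveal: /admin is protected elsewhere.
project_controls = {"/admin": {"auth"}}

if __name__ == "__main__":
    for finding in confirm_findings(candidates, project_controls):
        print(finding)
```

In this toy run, the `/admin` candidate is dropped because a protection exists elsewhere in the project, while the `/export` one is still reported. That is the kind of false alarm V1 would have raised.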

What we learned: It's better to miss some problems than to cry wolf too often. When security tools give too many false alarms, people stop trusting them. We'd rather flag fewer issues that teams will actually fix than overwhelm them with noise.

Traction — How real people are using it, and what is happening as a result

Example of an engineer fixing a security issue surfaced by Open47, where the app was trusting files it got from the internet and could be tricked by a sneaky file. If left unfixed, someone could steal information, crash the app, or make it do things it shouldn’t.

Real usage across hackathon teams: We've reviewed several major hackathon projects including Keypress, Polyglot, Confetti, and Insight, finding over 30 security problems that could have exposed user data or allowed unauthorised access to systems.

Teams see immediate value and are taking action: Development teams have acknowledged our findings and fixed many issues based on our recommendations, including problems where user data wasn't properly protected, systems didn't check user inputs safely, and sensitive files were accidentally left exposed online.

"very nice, wish i had this before doing code review lol" - Azer (Confetti)

"I'm fixing some of the advisories by Open47 … these are really cool" - Eliot (Keypress)

"good catch, will fix in a subsequent PR" - Shyam (Playtime)

Significant time and cost savings: Most security reviews now cost around $1 to run and finish in under 10 minutes. The same checks used to take a developer a full working day.

Examples of cost and time taken for large code changes

| Project | Lines of code | Time taken | Cost |
|---|---|---|---|
| Confetti | ~14,000 | 16 minutes | ~$6.20 |
| Polyglot | ~12,000 | 33 minutes | ~$7.70 |
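For a rough sense of scale, the two rows above can be normalised to cost and time per 1,000 lines. This is back-of-the-envelope arithmetic on the approximate figures in the table, not a measured benchmark; the `per_thousand_lines` helper is just for illustration.

```python
# Approximate figures copied from the table above.
runs = {
    "Confetti": {"lines": 14_000, "minutes": 16, "cost_usd": 6.20},
    "Polyglot": {"lines": 12_000, "minutes": 33, "cost_usd": 7.70},
}


def per_thousand_lines(run: dict) -> dict:
    """Normalise one review run to cost and time per 1,000 lines of code."""
    thousands = run["lines"] / 1000
    return {
        "usd_per_kloc": round(run["cost_usd"] / thousands, 2),
        "minutes_per_kloc": round(run["minutes"] / thousands, 2),
    }


if __name__ == "__main__":
    for name, run in runs.items():
        print(name, per_thousand_lines(run))
    # Confetti {'usd_per_kloc': 0.44, 'minutes_per_kloc': 1.14}
    # Polyglot {'usd_per_kloc': 0.64, 'minutes_per_kloc': 2.75}
```

With only two data points, the spread mostly shows that cost and time do not scale purely with line count.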

Next steps and current limitations: We're planning to test this on live government systems with security engineers involved, add rules specific to different teams, and expand beyond reviewing a single project at a time. Currently we're limited by high AI processing costs and have only tested on projects with up to about 14,000 lines of changed code.

Hello from the only engineer hacking on Open47 - @adriangohjw